Inverse kinematics is the opposite of forward kinematics: you have a desired end effector position, but need to know the joint angles required to achieve it. The robot sees a kitten and wants to grab it; what angles should each joint go to? Although far more useful than forward kinematics, this calculation is also much more complicated. As such, I will not show you how to derive the equations for your own robot arm configuration.

Instead, I will just give you the equations for our specific robot design:

So what makes inverse kinematics so hard? Well, other than the fact that it involves non-linear simultaneous equations, there are other reasons too.

First, there is the very likely possibility of multiple, sometimes infinitely many, solutions (as shown below). How would your arm choose which is optimal, based on torques, previous arm position, gripping angle, etc.?

There is the possibility of zero solutions. Maybe the location is outside the workspace, or maybe the point within the workspace must be gripped at an impossible angle.

Singularities, places where the equations demand infinite acceleration, can blow up equations and/or leave motors lagging behind (motors can't achieve infinite acceleration).

And lastly, exponential equations take forever to calculate on a microcontroller. No point in having advanced equations on a processor that can't keep up.
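To make the multiple-solution and zero-solution cases concrete, here is a minimal sketch for a simple two-link planar arm. This is not the equation set for the robot design in this tutorial; the two-link geometry, the function names `two_link_ik` and `forward_2link`, and the link lengths `l1` and `l2` are all assumptions made for illustration.

```python
import math

def two_link_ik(x, y, l1, l2):
    """Return every (shoulder, elbow) angle pair in radians that reaches (x, y).

    Hypothetical two-link planar arm: link lengths l1 and l2, shoulder at the origin.
    An empty list means the target is outside the workspace.
    """
    # The law of cosines gives the cosine of the elbow angle.
    cos_elbow = (x * x + y * y - l1 * l1 - l2 * l2) / (2.0 * l1 * l2)
    if abs(cos_elbow) > 1.0:
        return []  # zero solutions: target is too far away or too close
    solutions = []
    for sign in (1.0, -1.0):  # the two mirror-image elbow poses
        elbow = sign * math.acos(cos_elbow)
        shoulder = math.atan2(y, x) - math.atan2(
            l2 * math.sin(elbow), l1 + l2 * math.cos(elbow))
        solutions.append((shoulder, elbow))
    return solutions

def forward_2link(shoulder, elbow, l1, l2):
    """Forward kinematics of the same arm, used here to check the IK answers."""
    x = l1 * math.cos(shoulder) + l2 * math.cos(shoulder + elbow)
    y = l1 * math.sin(shoulder) + l2 * math.sin(shoulder + elbow)
    return x, y

if __name__ == "__main__":
    for angles in two_link_ik(1.0, 1.0, 1.0, 1.0):
        print(angles, forward_2link(angles[0], angles[1], 1.0, 1.0))
    print(two_link_ik(3.0, 0.0, 1.0, 1.0))  # unreachable target
```

Even this tiny arm returns two valid poses for most reachable points, so the software still has to pick one, for example the pose closest to the previous arm position. When the arm is fully stretched the two poses coincide.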

Too lazy to calculate inverse kinematics yourself?

Check out my Robot Arm Designer v1 in Excel.

Motion Planning

Motion planning on a robot arm is fairly complex so I will just give you the basics.

Suppose your robot arm has objects within its workspace. How does the arm move through the workspace to reach a certain point? To do this, assume your robot arm is just a simple mobile robot navigating in 3D space. The end effector will traverse the space just like a mobile robot, except now it must also make sure the other joints and links do not collide with anything. This is extremely difficult to do . . .

What if you want your robot end effector to draw straight lines with a pencil? Getting it to go from point A to point B in a straight line is relatively simple to solve. What your robot should do, by using inverse kinematics, is go to many points between point A and point B. The final motion will come out as a smooth straight line. This method works not only for straight lines, but for curved ones too. On expensive professional robotic arms all you need to do is program two points, and tell the robot how to go between the two points (straight line, fast as possible, etc.). For further reading, you could use the wavefront algorithm to plan this two point trajectory.
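The "many points between point A and point B" idea can be sketched as plain linear interpolation. This is only a rough illustration; the function name `straight_line_waypoints` and the choice of evenly spaced points are my assumptions, not something prescribed by any particular robot controller.

```python
def straight_line_waypoints(start, end, steps):
    """Return steps + 1 evenly spaced points from start to end, inclusive.

    start and end are coordinate tuples of equal length (2D or 3D).
    """
    if steps < 1:
        raise ValueError("steps must be at least 1")
    return [
        tuple(s + (e - s) * i / steps for s, e in zip(start, end))
        for i in range(steps + 1)
    ]

if __name__ == "__main__":
    for point in straight_line_waypoints((0.0, 0.0), (10.0, 5.0), 5):
        print(point)
```

Each waypoint would then be handed to your inverse kinematics routine in turn. More waypoints give a straighter line, at the cost of more calculations per move.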

Velocity (and more Motion Planning)

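Calculating end effector velocity is mathematically complex, so I will go only into the basics. The simplest way to do it is to assume your robot arm (held straight out) is the spoke of a rotating wheel of radius L. The joint rotates at Y rpm, so the end effector covers one circumference per revolution and its velocity is

velocity = 2 * pi * L * Y (distance per minute, in the same units as L)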
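As a quick numeric check of the rotating-wheel approximation, here is a minimal sketch. It assumes the arm length is the radius of the circle the end effector traces; the function name `tip_speed` and the choice of metres and seconds are mine.

```python
import math

def tip_speed(arm_length_m, rpm):
    """Linear speed in m/s of the end of a straight arm rotating about its base."""
    revolutions_per_second = rpm / 60.0
    # One revolution moves the tip one full circumference: 2 * pi * radius.
    return 2.0 * math.pi * arm_length_m * revolutions_per_second

if __name__ == "__main__":
    print(tip_speed(0.5, 60.0))  # a 0.5 m arm turning once per second
```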

However the end effector does not just rotate about the base, but can go in many directions. The end effector can follow a straight line, or curve, etc.

With robot arms, the quickest way between two points is often not a straight line. If two joints have two different motors, or carry different loads, then max velocity can vary between them. When you tell the end effector to go from one point to the next, you have two decisions. Have it follow a straight line between both points, or tell all the joints to go as fast as possible - leaving the end effector to possibly swing wildly between those points.

In the image below the end effector of the robot arm is moving from the blue point to the red point. In the top example, the end effector travels a straight line. This is the only possible motion this arm can perform to travel a straight line. In the bottom example, the arm is told to get to the red point as fast as possible. Of the many possible trajectories, the arm takes the one that lets the joints rotate the fastest.

Which method is better? There are many deciding factors. Usually you want straight lines when the object the arm moves is really heavy, since a heavy object takes a large change in momentum to speed up, slow down, or redirect (momentum = mass * velocity). But for maximum speed (perhaps the arm isn't carrying anything, or just light objects) you would want maximum joint speeds.

Now suppose you want your robot arm to operate at a certain rotational velocity. How much torque would a joint need? First, let's go back to our FBD:

Now let's suppose you want joint J0 to rotate 180 degrees in under 2 seconds. What torque does the J0 motor need? Well, J0 is not affected by gravity, so all we need to consider is momentum and inertia. Putting this in equation form we get this:

torque = moment_of_inertia * angular_acceleration

Breaking that equation into subcomponents we get:

torque = (mass * distance^2) * (change_in_angular_velocity / change_in_time)

and

change_in_angular_velocity = (angular_velocity1)-(angular_velocity0)

angular_velocity = change_in_angle / change_in_time

Now assuming at start time 0 that angular_velocity0 is zero, we get

torque = (mass * distance^2) * (angular_velocity / change_in_time)

where distance is defined as the distance from the rotation axis to the center of mass of the arm:

center of mass of the arm = distance = 1/2 * (arm_length)

(use arm mass)

but you also need to account for the object your arm holds:

center of mass of the object = distance = arm_length

(use object mass)

So then calculate torque for both the arm and then again for the object, then add the two torques together for the total:

torque(of_object) + torque(of_arm) = torque(for_motor)

And of course, if J0 were additionally affected by gravity, you would add the torque required to lift the arm to the torque required to reach the velocity you need. To avoid doing this by hand, just use the robot arm calculator.
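The torque steps above can be sketched in a few lines. This follows the tutorial's own simplifications (each mass treated as a point at its center of mass, starting from rest, gravity ignored); the function names and the example masses and lengths are made up for illustration.

```python
import math

def point_mass_torque(mass_kg, distance_m, angle_rad, time_s):
    """torque = (mass * distance^2) * (angular_velocity / change_in_time)."""
    angular_velocity = angle_rad / time_s              # change_in_angle / change_in_time
    angular_acceleration = angular_velocity / time_s   # starting from rest
    return mass_kg * distance_m ** 2 * angular_acceleration

def j0_motor_torque(arm_mass_kg, arm_length_m, object_mass_kg, angle_rad, time_s):
    """Total J0 torque in N*m: torque(of_object) + torque(of_arm)."""
    # Arm: center of mass assumed at half the arm length.
    torque_of_arm = point_mass_torque(
        arm_mass_kg, arm_length_m / 2.0, angle_rad, time_s)
    # Object: held at the end of the arm.
    torque_of_object = point_mass_torque(
        object_mass_kg, arm_length_m, angle_rad, time_s)
    return torque_of_object + torque_of_arm

if __name__ == "__main__":
    # Hypothetical arm: 1 kg, 0.5 m long, holding 0.2 kg, turning 180 degrees in 2 s.
    print(j0_motor_torque(1.0, 0.5, 0.2, math.pi, 2.0))
```

Treat the result as a rough lower bound and pick a motor with a healthy safety margin. Friction, gearing losses, and the time spent accelerating and decelerating are all left out.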

But it gets harder . . . the above equation is for rotational motion and not for straight line motions. Look up something called a Jacobian if you enjoy mathematical pain =P
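For a small taste of what a Jacobian does, here is a minimal sketch for a hypothetical two-link planar arm (not the arm in this tutorial). It maps joint angular velocities to the end effector's straight-line velocity. The function names and the link lengths `l1` and `l2` are assumptions for illustration.

```python
import math

def jacobian_2link(theta1, theta2, l1, l2):
    """2x2 Jacobian of end effector (x, y) with respect to the two joint angles."""
    s1, c1 = math.sin(theta1), math.cos(theta1)
    s12, c12 = math.sin(theta1 + theta2), math.cos(theta1 + theta2)
    return [
        [-l1 * s1 - l2 * s12, -l2 * s12],
        [l1 * c1 + l2 * c12, l2 * c12],
    ]

def end_effector_velocity(theta1, theta2, omega1, omega2, l1, l2):
    """Multiply the Jacobian by the joint velocities (rad/s) to get (vx, vy)."""
    j = jacobian_2link(theta1, theta2, l1, l2)
    vx = j[0][0] * omega1 + j[0][1] * omega2
    vy = j[1][0] * omega1 + j[1][1] * omega2
    return vx, vy

if __name__ == "__main__":
    # Arm stretched straight along x, only the base joint turning at 1 rad/s.
    print(end_effector_velocity(0.0, 0.0, 1.0, 0.0, 1.0, 1.0))
```

For this arm the determinant of the matrix works out to l1 * l2 * sin(theta2), which is zero when the arm is fully stretched or fully folded. Those are exactly the singularities mentioned earlier.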

Another Video!

In order to better understand robot arm dynamics, we had a robot arm bowling competition using the same DENSO 6DOF robot arms as in the clocks video.

Each team programs an arm to do two tasks:

- Try to place all three of its pegs in the opponents' goal

- Block opponent pegs from going in your own goal

Arm Sagging

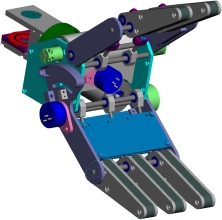

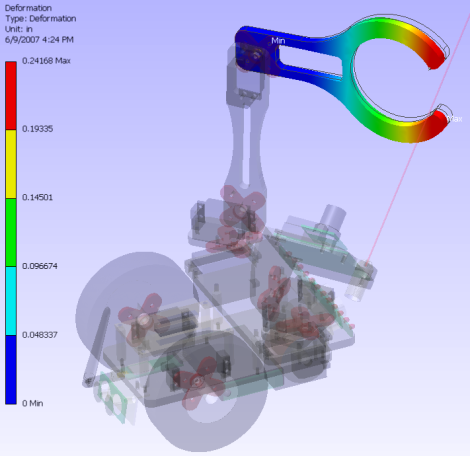

Arm sagging is a common affliction of badly designed robot arms. This is when an arm is too long and heavy, bending when outwardly stretched. When designing your arm, make sure the arm is reinforced and lightweight. Do a finite element analysis to determine bending deflection/stress such as I did on my ERP robot:

Keep the heaviest components, such as motors, as close to the robot arm base as possible. It might be a good idea for the middle arm joint to be chain/belt driven by a motor located at the base (to keep the heavy motor on the base and off the arm).

The sagging problem is even worse when the arm wobbles between stop-start motions. To solve this, implement a PID controller so as to slow the arm down before it makes a full stop.
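A PID controller can be sketched in a few lines. This is a generic textbook form, not code from any particular arm; the class name `PID` and the gains `kp`, `ki`, and `kd` are placeholders you would tune for your own motors.

```python
class PID:
    """Minimal PID controller: output = kp*error + ki*integral + kd*derivative."""

    def __init__(self, kp, ki, kd):
        self.kp, self.ki, self.kd = kp, ki, kd
        self.integral = 0.0
        self.previous_error = None

    def update(self, target, measured, dt):
        """Return the motor command for one control step of length dt seconds."""
        error = target - measured
        self.integral += error * dt
        if self.previous_error is None:
            derivative = 0.0  # no history yet on the first step
        else:
            derivative = (error - self.previous_error) / dt
        self.previous_error = error
        return self.kp * error + self.ki * self.integral + self.kd * derivative

if __name__ == "__main__":
    pid = PID(kp=2.0, ki=0.0, kd=0.0)
    print(pid.update(target=10.0, measured=4.0, dt=0.1))
```

The proportional term shrinks as the joint nears its target angle, which is what slows the arm down before the stop. The derivative term adds damping against the wobble.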

Sensing

Most robot arms only have internal sensors, such as encoders. But for good reasons you may want to add additional sensors, such as video, touch, haptic, etc.

A robot arm without video sensing is like an artist painting with his eyes closed. Using basic visual feedback algorithms, a robot arm could go from point to point on its own without a list of preprogrammed positions. Show the arm a red ball and it could actually reach for it (visual tracking and servoing). If the arm can locate a position in the X-Y space of an image, it could then direct the end effector to go to that same X-Y location (by using inverse kinematics). If you are interested in learning more about the vision aspect of visual servoing, please read the Computer Vision Tutorials.

Haptic sensing is a little different in that there is a human in the loop. The human controls the robot arm movements remotely. This could be done by wearing a special glove, or by operating a miniature model with position sensors. Robotic arms for amputees are doing a form of haptic sensing. Also of note, some robot arms have feedback sensors (such as touch) whose readings get directed back to the human (vibrating the glove, locking model joints, etc.).

Tactile sensing (sensing by touch) usually involves force feedback sensors and current sensors. These sensors detect collisions by watching for unexpected force/current spikes. A robot end effector can detect a successful grasp, and avoid grasping too tightly or too lightly, just by measuring force. Another method would be to use current limiters, since sudden large current draws generally mean a collision/contact has occurred. An arm could also adjust end effector velocity by knowing if it is carrying a heavy object or a light object, and perhaps even identify the object by its weight.
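Spike detection on a motor current reading can be sketched roughly like this. The function name `detect_contact`, the moving-average baseline, and the default `window` and `spike_ratio` values are all my assumptions. Real thresholds depend on your motor and sensor.

```python
def detect_contact(current_samples_amps, window=5, spike_ratio=2.0):
    """Return the index of the first sample that looks like a contact, or None.

    A sample counts as a spike when it exceeds spike_ratio times the average
    of the previous `window` samples.
    """
    for i in range(window, len(current_samples_amps)):
        baseline = sum(current_samples_amps[i - window:i]) / window
        if baseline > 0 and current_samples_amps[i] > spike_ratio * baseline:
            return i
    return None

if __name__ == "__main__":
    readings = [0.5, 0.5, 0.6, 0.5, 0.5, 0.55, 1.8, 1.9]
    print(detect_contact(readings))
```

Comparing against a recent average rather than a fixed number lets the same check work whether the arm is carrying a heavy object or a light one.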

Try this. Close your eyes, and put both of your hands in your lap. Now keeping your eyes closed, move your hand slowly to reach for your computer mouse. Do it!!!! You will see why soon . . . Now what will happen is that your hand will partially miss, but at least one of your fingers will touch the mouse. After that finger touches, your hand will suddenly re-adjust its position because it now knows exactly where that mouse is. This is the benefit of tactile sensing - no precision encoders required for perfect contact!

End Effector Design

In the future I will write a separate tutorial on how to design robot grippers, as it will require many more pages of material.

In the meantime, you might be interested in reading the tutorial for calculating friction and force for robot end effectors.

I also went into some detail describing my robot arm card dealing gripper.

Anyway, I hope you have enjoyed this robot arm tutorial!